- Campbell Arnold

- Mar 24

“258,000 images from 100 centers, in 26 countries, across 5 continents… It’s extremely complete.”

— Julien Vidal, CEO of AZmed

Welcome to Radiology Access! Your biweekly newsletter on the people, research, and technology transforming global imaging access.

In this issue, we cover:

RadAccess Named to Top 40 Radiology Resources List

AZmed demonstrates scalable AI platform across 250K+ radiographs in 26 countries

Learning From Everything: How foundation models are changing the game in radiology

Can We End the 3D MRI Tradeoff?

Resource Highlight: FREE Courses on MRI Safety in LMICs

RadAccess Named to Top 40 Radiology Resources List

We’re thrilled to share that RadAccess was named to The Imaging Wire’s Top 40 Radiology Resources list. It’s an honor, and one that reflects not just the readers who engage with and share the content, but also the researchers, clinicians, and companies doing the work that makes it worth covering in the first place. Thanks to everyone who reads, contributes, and builds in this space. If you’ve found RadAccess useful, consider forwarding it to someone else who might as well.

From Point Solutions to Platforms

AZmed demonstrates scalable AI platform across 250K+ radiographs in 26 countries.

Radiography is high-volume, high-variability, and still heavily dependent on human attention under ever-increasing time pressure. Despite its central role in care, missed findings remain common, and workflows are increasingly strained. In many settings, radiologists spend less than 30 seconds per case just to keep pace with rising scan volumes. This challenge will only intensify as imaging demand grows globally, particularly in regions without sufficient radiologists or training programs.

To address this, a wave of companies has developed AI tools for X-ray detection and quantification. However, the result has been a proliferation of point solutions that are difficult to integrate and lack large-scale efficacy evidence. A recent Radiography study evaluated AZmed’s Rayvolve platform across more than 250,000 radiographs from 26 countries to determine whether a single, integrated AI suite can reliably handle the breadth of real-world X-ray interpretation. This is the largest study of its kind, and an early indicator of whether AI can perform consistently across multiple clinical use cases in heterogeneous populations around the world.

Here is the methodology at a glance:

258,373 radiographs collected from 100 centers across 26 countries.

Multi-reader consensus ground truth with two independent reads per case (13-reader pool) and third-party adjudication.

Evaluation across four AI modules:

Trauma detection (fractures, dislocations, effusions)

Chest pathology detection

Automated MSK measurements

Pediatric bone age estimation

Metrics included AUC, sensitivity/specificity, and measurement error (MAE) with subgroup analyses.
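For readers less familiar with the metrics listed above, here is a minimal sketch of how sensitivity, specificity, AUC, and MAE can be computed. The labels, scores, and angle values are made-up toy numbers, not data from the study, and the function names are our own for illustration.

```python
# Toy illustration of the evaluation metrics named above.
# All data below is invented for the example; it is NOT from the study.

def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for binary labels and predictions."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    return tp / (tp + fn), tn / (tn + fp)

def auc(y_true, scores):
    """AUC as the chance a random positive outscores a random negative (ties count half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def mean_absolute_error(truth, pred):
    """Average absolute difference between reference and predicted measurements."""
    return sum(abs(a - b) for a, b in zip(truth, pred)) / len(truth)

# Hypothetical fracture labels (1 = finding present) and model scores.
labels = [1, 1, 1, 0, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.4, 0.3, 0.2, 0.6, 0.7, 0.1]
preds = [1 if s >= 0.5 else 0 for s in scores]  # 0.5 threshold is an assumption

sens, spec = sensitivity_specificity(labels, preds)
print(f"sensitivity={sens:.2f} specificity={spec:.2f} AUC={auc(labels, scores):.4f}")

# Hypothetical angle measurements in degrees: reader consensus vs model output.
print(f"MAE={mean_absolute_error([10.0, 25.0, 31.0], [11.0, 24.0, 33.0]):.2f} degrees")
```

Note that sensitivity and specificity depend on the chosen threshold, while AUC summarizes ranking quality across all thresholds, which is why studies like this one typically report both.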

In brief, the study found:

Near-ceiling performance in trauma detection: AUC ~98%, with sensitivity and specificity both ~96–97%.

Strong chest performance: AUC ~97.8%, maintaining robustness across geographic regions.

High measurement precision: ~1–2° and ~1 mm error (well within manual variability ranges).

Bone age estimation within ~6 months of ground truth with strong correlation.

Generalizability of performance across countries, scanners, and patient populations.

However, the bigger story isn’t accuracy. It’s integration. Radiology AI has long been fragmented into point solutions. This study suggests that a unified platform can deliver consistent performance across multiple clinical tasks without breaking under real-world variability. That’s a meaningful shift for both workflow design and deployment economics. What the field needs now are studies that quantify how these systems change radiologist behavior, reduce misses, and, most critically, improve an institution's report throughput.

Bottom line: AZmed’s study of 250K+ radiographs showed strong performance across 4 tools and points toward a more unified approach to radiology AI deployment in global settings.

Learning From Everything

How foundation models are changing the game in radiology.

Today, most imaging AI is still task-specific, data-hungry, and frankly brittle, which makes it difficult to deploy across heterogeneous clinical environments and virtually impossible to develop for rare diseases and low-data applications. However, the new wave of foundation models offers a promising alternative. One example is the open-source brain MRI model described in a recent Nature Neuroscience study.

The authors introduce BrainIAC (Brain Imaging Adaptive Core), a self-supervised foundation model pretrained on 48,965 brain MRIs spanning diverse scanners, institutions, and patient populations. Instead of relying on labeled data, the model learns generalizable representations from unlabeled scans, enabling it to then be adapted to a wide range of downstream tasks with minimal fine-tuning. Here is the approach they took to develop and evaluate the model:

Self-supervised pretraining on over 35 publicly available datasets, including 4 sequence types (T1, T2, FLAIR, and T1c)

Pretraining relied on contrastive learning and masked autoencoders

Adapted and evaluated across 7 downstream tasks, including:

Brain age prediction

Cancer mutation subtype prediction

Survival prediction

Mild cognitive impairment classification

Stroke prediction

Sequence classification

Tumor segmentation

Benchmarked against both supervised models and existing pretrained algorithms

Tested in few-shot and low-data regimes
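To make the contrastive part of the pretraining step more concrete, here is a minimal sketch of an InfoNCE-style contrastive loss, one common formulation of contrastive learning. This is a generic illustration, not the authors' implementation: the function names, the temperature of 0.1, and the tiny 2-D "embeddings" are all assumptions chosen for the example. The masked autoencoder component is not shown.

```python
# Generic InfoNCE-style contrastive loss on toy embeddings.
# A sketch of the general technique, NOT the BrainIAC training code.
import math

def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def info_nce(view_a, view_b, temperature=0.1):
    """Mean loss where view_a[i] should match view_b[i] and no other view_b[j]."""
    losses = []
    for i, anchor in enumerate(view_a):
        logits = [cosine(anchor, other) / temperature for other in view_b]
        # Numerically stable log-sum-exp over all candidates.
        peak = max(logits)
        log_denominator = peak + math.log(sum(math.exp(l - peak) for l in logits))
        losses.append(log_denominator - logits[i])
    return sum(losses) / len(losses)

# Hypothetical embeddings of two augmented views of the same three scans.
view_a = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
aligned = [[0.9, 0.1], [0.1, 0.9], [-0.9, -0.1]]
shuffled = aligned[1:] + aligned[:1]  # pairs deliberately mismatched

print("aligned loss: ", info_nce(view_a, aligned))
print("shuffled loss:", info_nce(view_a, shuffled))
```

The loss is low when two views of the same scan land close together in embedding space and high when they do not, which is the signal that lets a model learn from unlabeled data.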

With so many tasks evaluated, there are too many results to dig into individually, but a few consistent high-level takeaways stand out:

Consistently outperformed traditional supervised learning, especially when data was limited

Strong generalization across tasks, including high-difficulty predictive tasks

Robust to real-world variability across scanners, sites, and protocols

Better performance in few-shot settings, where data is extremely scarce
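One common way to adapt a pretrained model when labels are scarce is a linear probe: freeze the pretrained encoder and train only a small classifier on its embeddings. The sketch below illustrates that general idea with a tiny logistic regression. It is an assumption-laden toy, not the adaptation procedure used in the study: the 2-D "frozen embeddings", the four labeled examples, and the learning rate and epoch count are all hypothetical.

```python
# Toy linear probe: logistic regression trained on frozen embeddings.
# Illustrative only; the embeddings and hyperparameters are invented.
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def fit_linear_probe(embeddings, labels, lr=0.5, epochs=200):
    """Fit weights and bias by batch gradient descent on the logistic loss."""
    dim = len(embeddings[0])
    weights, bias = [0.0] * dim, 0.0
    n = len(embeddings)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(embeddings, labels):
            error = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            for j, xi in enumerate(x):
                grad_w[j] += error * xi
            grad_b += error
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias

def predict(weights, bias, x):
    """Return class 1 if the predicted probability is at least 0.5, else 0."""
    return 1 if sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) >= 0.5 else 0

# Four labeled examples stand in for a few-shot training set.
train_x = [[1.0, 0.8], [0.9, 1.1], [-1.0, -0.9], [-0.8, -1.2]]
train_y = [1, 1, 0, 0]
weights, bias = fit_linear_probe(train_x, train_y)

print(predict(weights, bias, [0.7, 0.9]), predict(weights, bias, [-0.9, -0.7]))
```

Because only a handful of parameters are trained, a probe like this can work with very few labels, provided the pretrained embeddings already separate the classes well.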

Looking towards the future, it’s clear that we are going to be able to leverage much more expansive datasets than our antiquated supervised learning approaches permitted. The amount of unlabeled data vastly outweighs our limited, manually annotated datasets. Foundation models offer us a way to truly leverage all the data at our disposal, while simultaneously offering more robustness and generalizability. Code for this project is open-source for non-commercial use.

Bottom line: With the advent of foundation models, we can develop better models that leverage the vast number of scans sitting in institutional data lakes.

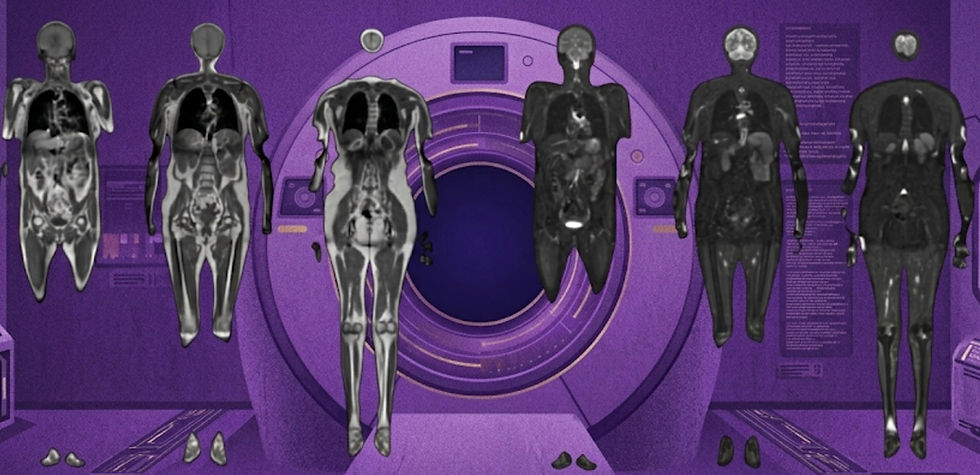

Can We End the 3D MRI Tradeoff?

How synthetic sequences can enable 3D acquisitions without workflow penalties.

What if a single 5-minute brain scan could deliver the same diagnostic value as a multi-sequence MRI exam, while also producing high-quality 3D sequences? Neuroradiology increasingly depends on 3D imaging for volumetrics and advanced, algorithm-enabled workflows. However, longer scan times remain a major efficiency bottleneck for 3D acquisitions. An AJNR study last year evaluating SyntheticMR’s SynMR tool suggests that the tradeoff may soon be a thing of the past.

This prospective, multicenter, multireader study:

Compared synthetic vs conventional 3D T1-weighted and T2-weighted MRI across 189 patients

Included five blinded neuroradiologists evaluating 945 randomized reads

Assigned patients to 8 diagnostic classes, with additional assessments of image quality (1-5), artifact presence (6 categories), and legibility of 6 key brain structures

The goal was to establish whether synthetic images can match real-world diagnostic performance. They found:

No significant difference in diagnostic performance

Sensitivity: 66% (synthetic) vs 68% (conventional)

Specificity: 85% (synthetic) vs 85% (conventional)

Comparable image quality, possibly slightly better for synthetic (ratings on the 1-5 scale, synthetic vs conventional)

T1w: 4.6 vs 4.5

T2w: 4.4 vs 4.2

High anatomical fidelity with >98% legibility of key structures

Synthetic T1w showed fewer artifacts, while T2w ratings were mixed

Consistent performance across readers and sites

Overall, synthetic 3D MRI matched conventional 3D imaging in diagnostic performance while enabling shorter, more efficient acquisitions. This has potential downstream benefits for workflow scalability and AI applications that are dependent on 3D imaging.

Bottom line: A 5-minute synthetic 3D MRI can match the diagnostic performance and image quality of conventional T1w and T2w brain MRI, and potentially enable downstream AI applications.

Resource Highlight: FREE Courses on MRI Safety in Low- and Middle-Income Settings

Looking to strengthen MRI safety knowledge, especially in low- and middle-income settings? This FREE course series hosted by ISMRM, ISMRT, and Rad-Aid offers six expert-led sessions, each paired with live Q&A discussions for practical insights. The course is led by Emre Kopanoglu (Cardiff University), Michael Steckner (MKS Consulting), Michael Hoff (UCSF), and Pradnya Mhatre (Emory). No worries if you missed a session: you can register here and watch prior videos as well! This is a great opportunity to build real-world expertise and support safer imaging worldwide.

Feedback

We’re eager to hear your thoughts as we continue to refine and improve RadAccess. Is there an article you expected to see but didn’t? Have suggestions for making the newsletter even better? Let us know! Reach out via email, LinkedIn, or X. We’d love to hear from you.

Disclaimer: There are no paid sponsors of this content. The opinions expressed are solely those of the newsletter authors, and do not necessarily reflect those of referenced works or companies.